The redesigned Drupal.org has been running strong since its launch in October 2010. The redesign is a big departure from the previous Drupal.org: it added a new theme and a number of new pages, including a customizable user dashboard, implemented multi-site search, and integrated Drupal.org with its sub-sites. The design and functionality were the result of a massive collaboration between paid contractors, community leaders, and hundreds of volunteer community members.

The infrastructure behind Drupal.org is always growing and adapting. The redesign gave the infrastructure team an opportunity to use a number of new and existing technologies to keep both Drupal.org and its contributors running efficiently.

The development process and Hudson

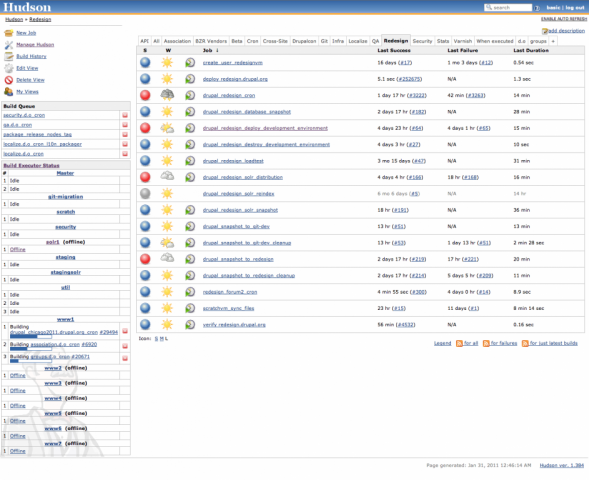

Before the redesign, Hudson was still in its infancy within the infrastructure team. It was being used as a replacement for existing cron jobs across the infrastructure, and was beginning to let us control and automate more complex routines.

The redesign needed individual development environments, and we had no easy way to automate building them. We had created Hudson jobs to take sanitized database snapshots of the Drupal.org sites, but we still had no automated way of creating the environments themselves. Automating the creation of a sanitized environment lowers the barrier to entry for community members interested in contributing.

We were able to use Hudson to tie together the deployment of an entire development environment. The new Hudson job allowed us to create a development environment by entering its name and a comment describing what it was for. The job would handle the rest: create a database and database user, check out the latest redesign codebase from Bazaar, import the latest copy of the sanitized database, configure settings.local.php, and set up a new virtual host in httpd.
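As a rough illustration of the sequence that job ran, here is a minimal Python sketch that only builds the ordered plan for one environment and executes nothing. The naming scheme, paths, branch URL, and the `Step` and `build_plan` names are all hypothetical and are not taken from the actual Hudson job.

```python
import shlex
from dataclasses import dataclass


@dataclass
class Step:
    description: str
    detail: str  # a shell command, or a note about a file to write


def build_plan(name: str, comment: str) -> list:
    """Return the ordered steps for one development environment.

    Nothing is executed here; a job runner would carry out each step.
    """
    if not name.isalnum():
        raise ValueError("environment name must be alphanumeric")

    # Hypothetical naming scheme, paths, and branch URL (assumptions).
    database = f"dev_{name}"
    docroot = f"/var/www/dev/{name}"
    branch = "bzr+ssh://example.org/redesign/trunk"
    snapshot = "/var/dumps/drupal_sanitized.sql"

    # One SQL batch creates the database and a matching user.
    # A real job would also set a password for the user.
    sql = (
        f"CREATE DATABASE {database}; "
        f"CREATE USER '{database}'@'localhost'; "
        f"GRANT ALL ON {database}.* TO '{database}'@'localhost';"
    )

    return [
        Step("create database and user", f"mysql -e {shlex.quote(sql)}"),
        Step("check out redesign code", f"bzr checkout {branch} {docroot}"),
        Step("import sanitized snapshot", f"mysql {database} < {snapshot}"),
        Step("configure settings.local.php",
             f"write {docroot}/sites/default/settings.local.php "
             f"pointing at {database}"),
        Step("set up virtual host",
             f"write an httpd vhost for {docroot} ({comment}), then reload httpd"),
    ]


if __name__ == "__main__":
    for number, step in enumerate(build_plan("search", "Solr tuning"), start=1):
        print(f"{number}. {step.description}: {step.detail}")
```

Keeping the plan separate from its execution makes it easy to review what a build would do before anything touches the database or web server.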

Solr and stagingsolr

Solr has become increasingly integrated into Drupal.org. We now have a highly available master/slave Solr cluster built using a combination of Varnish and Tomcat. The cluster efficiently handles searching on all of the Drupal.org sites, and Solr’s ability to scale allows us to index all of the sites in a single Solr core.

Using Hudson allowed us to provide a Solr core that was available for redesign contributors to download and install in their local development environments.

Load testing and tuning

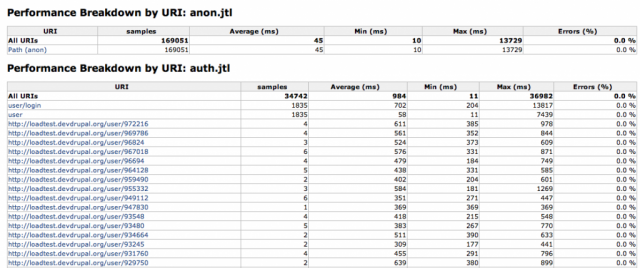

Load testing was one of the most important steps of the redesign. With so many new features being added to Drupal.org, it was important that the community be able to test and identify problem areas before launch. Beta.drupal.org was provided as a place for the community to test the new functionality and features, recording issues in the Drupal.org redesign issue queue. Using Hudson, we created a loadtest development environment, database, and Solr configuration to mimic that of beta.drupal.org. We then added another Hudson job to control running a JMeter load test suite. This job made use of the Performance plugin in Hudson, which aggregates data from the load test after completion and creates graphs and tables based on the results.

JMeter was used to test the site as both authenticated and anonymous users. The test suite was designed to exercise the new areas of the site, including Solr multi-site searching, the homepage, the dashboard, get started pages, download and extend, and module categories. Hudson was used to flush logs in MySQL and to analyze MySQL’s slow log with Maatkit’s mk-query-digest. The mk-query-digest report and the output of the Performance plugin were both available via Hudson’s load test job.
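To give a sense of the kind of aggregation involved, the following Python sketch summarizes JMeter results per page. It assumes the results were saved as CSV with a header row containing the `label`, `elapsed` (milliseconds), and `success` columns; the `summarize` function and the sample rows are made up for illustration and are not how the Performance plugin is implemented.

```python
import csv
import io
from collections import defaultdict


def summarize(results_csv):
    """Group JMeter samples by label and compute simple statistics."""
    timings = defaultdict(list)
    failures = defaultdict(int)

    for row in csv.DictReader(results_csv):
        label = row["label"]
        timings[label].append(int(row["elapsed"]))
        if row["success"].strip().lower() != "true":
            failures[label] += 1

    summary = {}
    for label, times in timings.items():
        summary[label] = {
            "count": len(times),
            "mean_ms": sum(times) / len(times),
            "max_ms": max(times),
            "error_rate": failures[label] / len(times),
        }
    return summary


# Made-up sample data, only to show the expected shape of the input.
SAMPLE = """timeStamp,elapsed,label,success
1,120,homepage,true
2,180,homepage,true
3,900,search,true
4,1500,search,false
"""

if __name__ == "__main__":
    report = summarize(io.StringIO(SAMPLE))
    # List the slowest pages first, since those are the tuning candidates.
    for label in sorted(report, key=lambda k: report[k]["mean_ms"], reverse=True):
        print(label, report[label])
```

Sorting by mean response time puts the likeliest tuning targets at the top, which is roughly how we read the graphs and tables after each run.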

We used these tests to identify the problem areas, and got to work tuning code and SQL queries for optimal performance. As performance improved, testing was moved from the loadtest environment to the production infrastructure. Tests on the production infrastructure were run in the same way with JMeter and Hudson. The devel module also played a huge part in profiling slow-loading pages.

Once all of the problem areas were identified and fixed, we were confident that we had a stable and performant product that was ready for release.

Highly available NFS

The redesign also gave us the opportunity to overhaul one of the remaining single points of failure in the production infrastructure: NFS. NFS is now hosted on a highly available cluster using DRBD and Heartbeat. DRBD mirrors a block device over the network, and is commonly described as RAID1 over a network. This keeps both nodes’ NFS exports in sync. Heartbeat controls a virtual IP address, and if failure is detected on “nfs1”, points the virtual IP to “nfs2” without downtime. The new NFS cluster also gave us a huge increase in available space, with plenty of room to grow.
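The failover decision can be pictured with a toy model. The Python sketch below is not Heartbeat's implementation or configuration; it only mimics the idea of a virtual IP following a healthy node. The `virtual_ip_owner` function is hypothetical, and the choice not to move the IP back automatically when “nfs1” recovers is an assumption made for the example.

```python
def virtual_ip_owner(nodes, healthy, current):
    """Return the node that should hold the virtual IP.

    nodes:   node names in order of preference
    healthy: mapping of node name to True/False
    current: node holding the virtual IP right now
    """
    # Leave the IP where it is while its current owner is healthy
    # (assumption: no automatic failback in this toy model).
    if healthy.get(current, False):
        return current

    # Otherwise hand the IP to the first healthy node.
    for node in nodes:
        if healthy.get(node, False):
            return node

    # No healthy node is left to serve the exports.
    return None


if __name__ == "__main__":
    nodes = ["nfs1", "nfs2"]
    owner = "nfs1"
    for status in (
        {"nfs1": True, "nfs2": True},    # normal operation
        {"nfs1": False, "nfs2": True},   # nfs1 fails, IP moves to nfs2
        {"nfs1": True, "nfs2": True},    # nfs1 recovers, IP stays on nfs2
    ):
        owner = virtual_ip_owner(nodes, status, owner)
        print(status, "->", owner)
```

Because DRBD keeps the block device mirrored, whichever node holds the virtual IP can serve the same exports.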

In The End

We were able to create an automated set of tools that enabled the distributed team of redesign contributors to write and test their changes effectively and efficiently. Many of these tools are still in use, and provide an easy way to create new development environments for anyone interested in working on Drupal.org. After the redesign, Drupal.org is stronger than ever, with a more dependable infrastructure and a number of new tools available for testing and QA.

DRBD: http://www.drbd.org/

Heartbeat: http://www.linux-ha.org/wiki/Heartbeat

Hudson: http://hudson-ci.org/

Hudson performance plugin: http://wiki.hudson-ci.org/display/HUDSON/Performance+Plugin

JMeter: http://jakarta.apache.org/jmeter/

Maatkit: http://www.maatkit.org/

Solr: http://lucene.apache.org/solr/

Varnish: http://www.varnish-cache.org/